In this post, I will talk about the first version of the Intermediate Representation I designed for Kunai Static Analyzer, this is a dalvik analysis library that I wrote as a project for my PhD, also as a way of learning about the Dalvik file format and improving my programming skills.

Authors

Writer:

Eduardo Blazquez

Technical and English reviewer:

Kunai Static Analyzer

Kunai was a static analysis library for dalvik bytecode. This library was published on Github, and it also was a paper published in the journal SoftwareX describing the projects and its benefits against another tool of the state of the art.

You can find the source code here: https://github.com/Fare9/KUNAI-static-analyzer

And the paper here: https://www.sciencedirect.com/science/article/pii/S2352711023000663

Although the project is discontinued (a new version is being written here Shuriken), I thought it would be interesting to write about my experience of how I wrote the first version of its Intermediate Representation (from now on IR), how I implemented the different algorithms, and how I transformed Dalvik bytecode into this IR.

As I said, this IR is the first version I wrote for Kunai, after that I decided to move my implementation to MLIR, an approach for writing specific IRs using a reusable and extensible compiler infrastructure. You can find my presentation of this new IR that I implemented, at EuroLLVM2023.

As I said, in this post I will dig into the process of creating an IR for supporting analysis of Dalvik Bytecode, the algorithms I followed and implemented, and finally the process for lifting the dalvik bytecode into this IR.

Please, grab a drink, play your favorite music (I recommend the following one if you want to chill: Dreamcatcher chill, or the next one if you prefer something with more rhythm: Dreamcatcher no ballad).

References

For the design and development of the IR I read chapters from different books, and I studied the code from different projects. Here you can find those books and projects:

Books

Advanced Compiler Design & Implementation

An Introduction to the Theory of Optimizing Compilers

Compilers Principle, Techniques & Tools

Introduction to Compilers and Language Design

SSA-based Compiler Design

Projects

MjolnIR - Kunai’s IR

My idea behind writing an IR for Kunai came from all the work I did during my PhD using Androguard. Many times using Androguard, I had to rely on the output of its disassembler and manually check the opcodes or even the mnemonic from the operation. While this process was easy for small analyses, it became hard for other projects. For example, I remember helping my friend Julien with one of his papers, at that moment we wanted to write a static taint analysis tool for Dalvik, and one of the ideas we had was to include a simple IR that would help us to recognize instructions in Androguard. It was at that moment when I saw the disadvantages of using Androguard, there was not a representation that could help us with the analysis (some time after that I discovered an Abstract Syntax Tree representation, but it is mostly used for the decompiler). After that, once I was writing Kunai, I thought that Kunai would benefit from an IR that would help analysts to write analyses with Kunai.

First of all, the most challenging decision choice was “what’s a cool name I can give it?”. Of course, a cool project needs a cool name. And because the project already had the name Kunai (a japanese farming tool popular for being the most used weapon in the manga/anime Naruto), the name of a weapon would be really cool as a name, and at that moment I remembered the following:

Mjölnir, the weapon of Thor, god of thunder in Norse Mythology. I had a cool name, and if I wrote it like MjolnIR, I could say that the last two characters come from Intermediate Representation.

Although the idea was good, my knowledge of the IRs was limited, and mostly based on using tools like Triton that gave me access to LLVM-IR code, or analyzing binaries using Ghidra and observing the generated P-code. Of course, I also remembered the little knowledge I gained in the Compiler’s class during the undergraduate bachelor in Computer Engineering. But I was willing to write the IR, and I had enough bibliography to start reading, as well as different projects to start learning from their code.

MjolnIR - Structure of an IR

Each Intermediate Representation or Intermediate Language can have its own shape, and own design. We can have that it is text-based or binary-based, or even a mix of both (for allowing modifications directly with a text-editor but fast processing with tools). We can have the next like Intermediate Representations (from the book Introduction to Compilers and Language Design) :

Abstract Syntax Tree (AST): although this representation is commonly used by the compiler’s front-end, it can be also used as an IR. Small simplifications are allowed in this representation. We can find that Triton uses a tree to represent the different expressions executed by its symbolic execution engine, and it is used for different translations like: expression tree to Z3 for expressions solving, or expression tree to LLVM IR. Being honest, in my first design I included some structures in the IR to allow a tree design, but I wasn’t completely sure about it, so I removed it from the design.

An Abstract Syntax Tree for a source code

A tree used by Triton to represent an expression

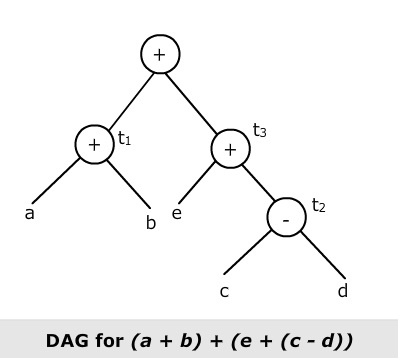

Directed Acyclic Graph (DAG): this is one step simplified from the AST, in a compiler this DAG can provide us with the type and value of each node, but in binary analysis, this information should be recovered. Probably it could be more useful for a compiler than for a binary analysis tool, since the information that would be needed to be recovered for using it would require a big effort.

A simple dag

Control-Flow Graph (CFG): this is a commonly used representation in binary analysis. A CFG is a graph in which blocks and edges represent the code and the control-flow of a function, in source code we can have that each block represents one statement from the source code, but in binary analysis commonly a block is a list of instructions (assembly or another intermediate language) with one entry point, and one exit point (a termination instruction, or an instruction to transfer the control of execution)

A Control-Flow Graph from a source code

A Control-Flow Graph from a binary

Static-Single Assignment (SSA): this representation form represents an intermediate language with one restriction, the variables can be assigned only once, so their value cannot change. In case we want to give a new value to a previously defined variable, we create a new variable but with another subindex. This makes algorithms like def-use trivial (we’ll see later in a part dedicated to the implemented algorithms). In some cases, we will need a special instruction called phi to create a new value that will hold a value depending on where the control-flow came from.

An Example of SSA IR

Linear IR: an intermediate representation that represents the instructions one after another as a sequence, which is similar to the assembly language. We have instructions to transfer values between memory and multiple registers etc. An example could be Dalvik’s bytecode, which is based on registers, and it is a kind of linear IR.

An Example of Dalvik Bytecode

Stack-Machine IR: In this case, we have a stack that is used to “store” values from the program, this kind of IR is designed to execute on a virtual stack machine. A good example of this one would be the bytecode of Java, which is stack-based.

An example of Java bytecode

A comparison between a stack and register bytecode

In general, my observation from the different books and projects is that an IR has a container for storing functions/methods from the programs. As I said, many of the IRs I have used so far use a Control-Flow Graph representation, so inside this container we have a block structure, and inside of the blocks a list of instructions.

The blocks in the CFG will have a single point of entry, and a single point of exit. The point of exit can be an instruction that represents a jump, a conditional jump or a switch statement. We can also find some instructions that have only a fallthrough (another instruction that doesn’t jump). Or in another case, we can find an exit block that ends with a return or throwing an exception.

Finally, we have the instructions inside the blocks. These instructions can represent a subset of real assembly instructions, and they can be used to represent all the semantics from a real machine. Of course, the complexity of these IR instructions will depend on the machines we want to represent. Mostly, we should have the following category of instructions:

Control-Flow instructions: instructions representing jumps, returns, switch, etc.

Data-Flow instructions: instructions to access memory for reading or writing to memory.

Operations: it wouldn’t be useful having access to memory data if we cannot work with that data, so here we have this category. The category would include binary operations, unary operations, comparisons, and assignments.

Values: we need some type of data to work with. Memory can have different representations, for example, a map of addresses. We can have registers, these registers can represent those from the machine, or we could have virtual registers. In an SSA representation, we have an infinite number of registers, and each register can be assigned a value only once. Register and memory can have an associated type, or at least an associated size.

In the next section, we will cover all these different instructions from the point of view of MjolnIR, and what are the instructions that I decided to implement with a schematic view of the instructions. Later I will present the implementation in C++ code of the types and some of the design decisions.

MjolnIR - Structure of MjolnIR

Although I had many examples, deciding how to create one IR is not something very straightforward or easy, so my best resource in this case was reading about Binary Ninja ILs, and also the book Advanced Compiler Design & Implementation, in both resources they talk about three-levels of representation, from a more low level syntax like assembly to higher level closer to source code (llil-mlil-hlil), and I thought that the Medium Level IL was a good design for what I wanted to do.

First of all, I thought I would need to represent a Method from Dalvik, and the best way to represent a method was using a control-flow graph, and this control-flow graph would have blocks.

Secondly, the blocks would have instructions, but we have different types of instructions, so let’s call all of them statements, and here we have condition-flow instructions, a nop instruction, and return instructions. But a fundamental one was the expressions, these expressions are the instructions that perform operations, we have assignments, we have arithmetic-logic instructions, comparisons, call to instructions, and memory usage. These expressions are applied over registers which have a type, so we also have types representing different things like registers, memory, constant ints, called methods, classes and even temporal registers.

I joined all of these, and I ended up having a type of tree structure, I represented that structure using a (probably not really well) Backus-Naur form (BNF). In the next section, I will show the BNFs that represent all the statements from the IR, I will also talk about the different instructions that are part of that BNF and what I represent with them.

Backus-Naur forms

IRStmnt

At the top of the IR we have the statements, these will be every instruction from the IR that could be executed by the program, between these IRStmnt are the expressions (explained later), but more specific statements are those that change the Control-Flow Graph from the function/method, these are conditional and unconditional jumps, return statements, etc.

IRStmnt --> IRUJmp | IRCJmp | IRRet | IRBlock | IRNop | IRSwitch | IRExpr IRUJmp --> jmp addr IRCJmp --> if (IRStmnt) { jmp addr } NEXT fallthrough_addr IRRet --> Ret IRStmnt IRBlock --> IRStmnt1, IRStmnt2, ..., IRStmntN IRSwitch --> switch (IRStmnt) { case X1 -> addr, case X2 -> addr, case XN -> addr }

All the instructions that are in a block are statements. Even the Blocks are statements. As I previously said, an IR needs Control-Flow instructions, these kinds of instructions would be:

Unconditional jumps that specify the address where they jump.

Conditional jumps that takes a comparison a statement and the address where to jump to in the case where the condition is met.

Return instruction that can specify a returned value.

Blocks, in my design blocks are statements too, and they contain a list of instructions.

Switch, like conditional jumps but these take an expression for the value to check and lookup various cases of addresses where to jump to.

Expressions, expressions are a special type of statements that will be subdivided into other instructions, we will see it in the next section.

IRExpr

The IR requires to support various instructions from the code, these are what we call IRExpr, these kinds of instructions don’t modify the control flow of the method but apply different kinds of operations to the variables/registers/memory in the program. They can affect the call-graph of a program, since one of the expressions allows calling other methods.

IRExpr --> IRBinOp | IRUnaryOp | IRAssign | IRPhi | IRCall | IRLoad | IRStore | IRZComp | IRBComp | IRNew | IRAlloca | IRType IRBinOp --> IRExpr <- IRExpr bin_op_t IRExpr IRUnaryOp --> IRExpr <- unary_op_t IRExpr IRAssign --> IRExpr <- IRExpr IRPhi --> IRExpr <- IRExpr, IRExpr, ..., IRExpr IRCall --> IRExpr(IRExpr1, IRExpr2, ..., IRExprN) IRLoad --> IRExpr <- *IRExpr IRStore --> *IRExpr <- IRExpr IRZComp --> IRExpr zero_comp_t IRBComp --> IRExpr comp_t IRExpr IRNew --> IRExpr <- new IRExpr IRAlloca --> IRExpr <- new IRExpr[IRExpr] # kind of operations bin_op_t --> ADD_OP_T | SUB_OP_T | S_MUL_OP_T | U_MUL_OP_T | S_DIV_OP_T | U_DIV_OP_T | MOD_OP_T unary_op_t --> INC_OP_T | DEC_OP_T | NOT_OP_T | NEG_OP_T | CAST_OP_T | Z_EXT_OP_T | S_EXT_OP_T zero_comp_t --> EQUAL_ZERO_T | NOT_EQUAL_ZERO_T comp_t --> EQUAL_T | NOT_EQUAL_T | GREATER_T | GREATER_EQUAL_T | LOWER_T | ABOVE_T | ABOVE_EQUAL_T | BELOW_T

Here we have the second type of instructions, we have operations that work with data, loading and storing data in memory, assigning values to registers, doing comparisons and memory allocations, etc.

Binary operations: those expressions that have two operands and store the result in a register/temporal register. We have different binary operations: add operation, sub operation, signed and unsigned multiplication and division, and finally mod operation.

Unary operations: expressions that only use one operand, the result is stored in a register/temporal register. We have different unary operations: increment, decrement, not, neg, casting to a different type, zero extension and sign extension.

Assignment operation: assign a value from one register/temporal register to another.

PHI instruction: this instruction is used in IRs to represent the same value coming from different blocks. The Phi instruction has as many operands as predecessor blocks. Conceptually, Phi returns a value depending on which block is executed before the phi instruction.

Example of a PHI instruction.

Call instruction: this instruction has a Callee that is called, it gets a variable number of operands, and it optionally returns a value.

Load and Store operations: used to load and store values from memory. In the case of Android, here we would have access to the class fields.

Zero comparison operation: a comparison with a zero value, to know if the provided expression is equals or not equal to zero. The value returned by the operation is commonly used in a conditional jump.

Binary comparison operation: this operation receives two expressions and a type of comparison, the available comparisons are equal, not equal, greater, greater-equal, lower, above, above-equal and below (for signed and unsigned comparisons). The value returned by the operation is commonly used in a conditional jump.

New operation: used to initialize a new object.

Alloca operation: used to initialize arrays with a specific size.

Type: this is an especial expression that represents the different types available for the IR.

IRType

For supporting the types we find in the binary code, we have written a series of classes which derive from a superclass named IRType, as derived classes we have: registers, constant values, strings, memory, callee types.

IRType --> IRReg | IRTempReg | IRConstInt | IRMemory | IRString | IRCallee | IRField | IRClass | IRFundamental | NONE IRFundamental --> F_BOOLEAN | F_BYTE | F_CHAR | F_DOUBLE | F_FLOAT | F_INT | F_LONG | F_SHORT | F_VOID

Finally, we have values that are used in the expressions and also in the statements. Some IRs call some of these Values , others have registers , etc. In case of MjolnIR, I included registers that represent the registers from Dalvik, and also temporal registers that are used as results for some of the expression operations. Now we will list all of them with a description:

Registers: used to represent the registers from Dalvik.

Temporal registers: used as an intermediate storage for operations in the expressions.

Constant Integers: integer values used, for example, in binary operations or comparisons.

Memory: addresses of memory, including an accessed offset.

String: a constant string value.

Callee: a method called by the call instruction from the expressions.

Field: a field from a Java-like language, the field contains information like the class it belongs, the name of the field, the type, etc.

Class: a Java-like language class, with the name of the class.

Fundamental: it represents the fundamental values available in a Java-like language.

Summary of the IR instructions

Next I show a summary of the IR:

Structure of MjolnIR.

In this image, I include all the statements from the IR, together with that in the bottom of the image we have what will be later explained, the lifter from Dalvik to MjolnIR. The lifter generate IRGraphs that contains IRBlocks which contain a list of IRStmnt s. Finally, from an IRGraph , an SSA form can be obtained, I will explain the algorithm used later. As part of the original idea, an optimizer was going to be written.

Implementation

The idea behind this post is not only providing a theoretical view like you can see in a compiler’s book, but also to provide a real life implementation of such an IR, and an explanation of it’s implementation. After explaining each one of the instructions, I will explain the different algorithms used for manipulating the CFG.

One clarification before starting, from now on I will use the following notation, I write the name of the classes using uppercase for the first character of the name, and in case of the IR all the classes start with IR followed by the name of the component that it represents (for example IRStmnt ). Most of these classes have their version as a smart pointer, the name of the smart pointer type is the same as its class but in lowercase and followed by _t (for example IRBlock -> irblock_t ). For the name of the variables and the name of the functions, I use snake case.

IRGraph Implementation

Although previously I wrote the BNF from IRGraph , here I will explain the implementation from the class.

First of all, for the graph we will change the notation and instead of having blocks or a pair of blocks, we will have nodes and edges. In C++ we will do this with using to give another name to the data types:

using Nodes = std :: vector < irblock_t > ; using Edge = std :: pair < irblock_t , irblock_t > ; using Edges = std :: vector < Edge > ; using Paths = std :: vector < std :: vector < irblock_t >> ;

Here we can see that the nodes are a vector of blocks, and the edges are a pair of blocks, and edges just a vector of edge. Finally, for some algorithms we will use Paths, which are a list of blocks.

Now we can move to see the implementation of a graph. I will not paste the implementation of the functions as I will explain some implementations later in depth, and also the whole code will be included in a repository with all the code.

class IRGraph { IRGraph (); ~ IRGraph () = default ; bool add_node ( irblock_t node ); void add_edge ( irblock_t src , irblock_t dst ); void add_uniq_edge ( irblock_t src , irblock_t dst ); void add_block_to_sucessors ( irblock_t node , irblock_t successor ); void add_block_to_predecessors ( irblock_t node , irblock_t predecessor ); Nodes & get_nodes (); Edges & get_edges (); std :: optional < irblock_t > get_node_by_start_idx ( std :: uint64_t idx ); void merge_graph ( irgraph_t graph ); void del_edge ( irblock_t src , irblock_t dst ); void del_node ( irblock_t node ); void delete_block_from_sucessors ( irblock_t node , irblock_t block ); void delete_block_from_precessors ( irblock_t node , irblock_t block ); std :: vector < irblock_t > get_leaves (); std :: vector < irblock_t > get_heads (); Paths find_path ( irblock_t src , irblock_t dst , size_t cycles_count , std :: map < irblock_t , size_t > done ); Paths find_path_from_src ( irblock_t src , irblock_t dst , size_t cycles_count , std :: map < irblock_t , size_t > done ); Nodes reachable_sons ( irblock_t head ); Nodes reachable_parents ( irblock_t leaf ); std :: map < irblock_t , Nodes > compute_dominators ( irblock_t head ); std :: map < irblock_t , Nodes > compute_postdominators ( irblock_t leaf ); std :: map < irblock_t , irblock_t > compute_immediate_dominators (); std :: map < irblock_t , irblock_t > compute_immediate_postdominators (); std :: map < irblock_t , std :: set < irblock_t >> compute_dominance_frontier (); irgraph_t copy (); // node information size_t get_number_of_successors ( irblock_t node ); Nodes & get_successors ( irblock_t node ); size_t get_number_of_predecessors ( irblock_t node ); Nodes & get_predecessors ( irblock_t node ); node_type_t get_type_of_node ( irblock_t node ); // algorithms from Advanced Compiler Design and Implementation Nodes reachable_nodes_forward ( irblock_t head ); Nodes reachable_nodes_backward ( irblock_t leaf ); Nodes build_ebb ( irblock_t r ); Nodes Depth_First_Search ( irblock_t head ); Nodes Breadth_First_Search ( irblock_t head ); void generate_dot_file ( std :: string name ); void generate_dominator_tree ( std :: string name ); const std :: uint64_t get_cyclomatic_complexity (); void set_last_temporal ( std :: uint64_t last_temporal ); std :: uint64_t get_last_temporal (); private: Nodes nodes ; Edges edges ; std :: map < irblock_t , Nodes > successors ; std :: map < irblock_t , Nodes > predecessors ; std :: uint64_t cyclomatic_complexity = - 1 ; std :: uint64_t last_temporal ; }

As we can see, the graph contains the nodes which are a list of blocks, and the edges which are pairs of nodes (blocks connected through the control-flow). For each node, we store a list of successors and predecessors. And finally, some auxiliary variables, like the cyclomatic complexity of that function, and a variable that stores the last assigned temporal register.

For the graph, we have common utilities for adding blocks, removing them, connecting those blocks through edges, etc. But also other utilities not so common like: get_leaves to get nodes without successors, get_heads to get nodes without predecessors, find_path to find a connection between two blocks in the graph searching backwards, find_path_from_src to find a connection between two blocks in the graph searching forward, reachable_* that is used to get sons or parents, and others that implement common algorithms in graphs. For the last algorithms, I will explain them in-depth discussing the implementation code and the algorithm used.

IRBlock implementation

Now we can see the code from the basic blocks:

class IRBlock { IRBlock (); ~ IRBlock () = default ; void add_statement_at_beginning ( irstmnt_t statement ); void append_statement_to_block ( irstmnt_t statement ); bool delete_statement_by_position ( size_t pos ); size_t get_number_of_statements (); std :: vector < irstmnt_t > & get_statements (); void set_start_idx ( uint64_t start_idx ); void set_end_idx ( uint64_t end_idx ); uint64_t get_start_idx (); uint64_t get_end_idx (); std :: string get_name (); std :: string to_string (); void set_phi_node (); void clear_phi_node (); bool contains_phi_node () private: bool phi_node = false ; //! starting idx and last idx (used for jump calculation) uint64_t start_idx , end_idx ; //! statements from the basic block. std :: vector < irstmnt_t > block_statements ; }

The basic blocks do not contain much code, they just keep the first and the last index of their instructions, and information to know if a phi instruction exists in the block (for an explanation about the Phi instructions you can go to BNF section). Finally, the block contains a list of instructions belonging to that block. The included functions are just utilities for inserting instructions, deleting instructions, etc.

IRStmnt implementation

Now, we can see the implementation of the statements, as I previously said, the statements are the root of all the instructions (including the basic blocks). The statement class store also the enum with the type of the statements:

enum stmnt_type_t { UJMP_STMNT_T , CJMP_STMNT_T , RET_STMNT_T , NOP_STMNT_T , SWITCH_STMNT_T , EXPR_STMNT_T , NONE_STMNT_T = 99 // used to finish the chain of statements };

Also, we have an enum for all the operations, I included this enum as part of IRStmnt class too, so we can check which operation we are working with if we have an IRStmnt .

enum op_type_t { UJMP_OP_T , CJMP_OP_T , RET_OP_T , NOP_OP_T , SWITCH_OP_T , EXPR_OP_T , BINOP_OP_T , UNARYOP_OP_T , ASSIGN_OP_T , PHI_OP_T , CALL_OP_T , OP_T_OP_T , LOAD_OP_T , STORE_OP_T , ZCOMP_OP_T , BCOMP_OP_T , NEW_OP_T , ALLOCA_OP_T , TYPE_OP_T , REGISTER_OP_T , TEMP_REGISTER_OP_T , CONST_INT_OP_T , CONST_FLOAT_OP_T , FIELD_OP_T , MEM_OP_T , STRING_OP_T , CLASS_OP_T , CALLEE_OP_T , FUNDAMENTAL_OP_T , NONE_OP_T = 99 // used to finish the chain of statements };

Having IRStmnt as the top of the classes allows to provide it as a value for many parameters, or for variables, then we can cast the pointers in order to work with different classes. Maybe using classes and inheritance is not the best way to generate the IR instructions, but it was the one I had in mind when I thought about providing statements as part of the other instructions.

Now we will see the IRStmnt class:

class IRStmnt { public: IRStmnt ( stmnt_type_t stmnt_type , op_type_t op_type ); virtual ~ IRStmnt () = default ; stmnt_type_t get_statement_type (); op_type_t get_op_type (); std :: string to_string (); const std :: vector < irstmnt_t > & get_use_def_chain () const ; const std :: unordered_map < irexpr_t , std :: vector < irstmnt_t >> & get_def_use_chains () const ; std :: optional < std :: vector < irstmnt_t > *> get_def_use_chain_by_value ( irexpr_t value ); void add_instr_to_use_def_chain ( irstmnt_t instr ); void add_instr_to_def_use_chain ( irexpr_t var , irstmnt_t instr ); void invalidate_chains (); void invalidate_use_def_chain (); void invalidate_def_use_chains (); void print_use_def_and_def_use_chain (); private: //! Type of the statement. stmnt_type_t stmnt_type ; //! Op type op_type_t op_type ; //! Vectors used for use-def and def-use chains std :: vector < irstmnt_t > use_def_chain ; std :: unordered_map < irexpr_t , std :: vector < irstmnt_t >> def_use_chains ; };

This code represents the base of all the other instructions. As we can see, we have a couple of getters to know what kind the statement is, or what kind of operation it is. And then one of the most important parts. MjolnIR implemented both def-use and use-def chains, these are two lists that for each variable (register), it provides where the register is defined, and where it is used. Later I will provide a better description and the implementation of these algorithms. We have getters, setters and also functions to invalidate those chains.

IRUJmp implementation

Let’s see the implementation of an unconditional jump:

class IRUJmp : public IRStmnt { public: IRUJmp ( uint64_t addr , irblock_t target ); ~ IRUJmp () = default ; void set_jump_target ( irblock_t target ); irblock_t get_jump_target (); uint64_t get_jump_addr (); std :: string to_string (); private: //! offset or address of target uint64_t addr ; //! target where the jump will fall irblock_t target ; };

The most important part of this instruction in the way I implemented it is the target . With this target , given a basic block, we can know where the block will jump.

IRCJmp implementation

Implementation of the conditional jump:

class IRCJmp : public IRStmnt { public: IRCJmp ( uint64_t addr , irstmnt_t condition , irblock_t target , irblock_t fallthrough ); ~ IRCJmp () = default ; uint64_t get_addr (); irstmnt_t get_condition (); void set_jump_target ( irblock_t target ); irblock_t get_jump_target (); void set_fallthrough_Target ( irblock_t fallthrough ); irblock_t get_fallthrough_target (); std :: string to_string ();; private: //! offset or address of target uint64_t addr ; //! Condition for taking the target jump irstmnt_t condition ; //! target where the jump will fall irblock_t target ; //! fallthrough target. irblock_t fallthrough ; };

The implementation is similar to IRUJmp , but in this case we need two targets, one taken if the condition matches, and another for the fallthrough target. The condition is provided as an IRStmnt so any expression or type can be given as a condition (probably in a better implementation, that condition should be restricted to a few types or expressions).

IRRet implementation

class IRRet : public IRStmnt { public: IRRet ( irstmnt_t ret_value ); ~ IRRet () = default ; irstmnt_t get_return_value (); std :: string to_string (); private: //! Returned value, commonly a NONE IRType, or an IRReg. irstmnt_t ret_value ; };

A return instruction can optionally have a return value. A return instruction creates a leaf node, these are nodes that terminate the graph.

IRNop implementation

The nop instruction doesn’t receive any parameter, and can be used to create empty blocks (blocks that do nothing). So its implementation is pretty simple:

class IRRet : public IRStmnt { public: IRRet ( irstmnt_t ret_value ); ~ IRRet () = default ; irstmnt_t get_return_value (); std :: string to_string (); private: //! Returned value, commonly a NONE IRType, or an IRReg. irstmnt_t ret_value ; };

IRSwitch implementation

The last type of jump is the switch instruction; switch allows given an expression, jumps to different blocks.

class IRSwitch : public IRStmnt { public: IRSwitch ( std :: vector < int32_t > offsets , irexpr_t condition , std :: vector < int32_t > constants_checks ); ~ IRSwitch () = default ; const std :: vector < int32_t > & get_offsets () const ; irexpr_t get_condition (); const std :: vector < int32_t > & get_constants_checks () const ; std :: string to_string (); private: //! switch offsets where instruction will jump. std :: vector < int32_t > offsets ; //! condition taken to decide where to jump irexpr_t condition ; //! conditions checked during switch. std :: vector < int32_t > constants_checks ; };

We have a list of offsets and a list of checks for the case blocks from the switch statement. For the condition value, we have an expression (commonly a temporal register).

IRExpr implementation

Although the IRExpr instruction does not have itself a long codebase, it is the base class for all the expressions in MjolnIR, and it contains an enum to specify the type of expression we are working with:

enum expr_type_t { BINOP_EXPR_T , UNARYOP_EXPR_T , ASSIGN_EXPR_T , PHI_EXPR_T , CALL_EXPR_T , TYPE_EXPR_T , LOAD_EXPR_T , STORE_EXPR_T , ZCOMP_EXPR_T , BCOMP_EXPR_T , NEW_EXPR_T , ALLOCA_EXPR_T , NONE_EXPR_T = 99 // used to finish the expressions };

Finally, the code from the IRExpr class, contains the code that other expressions will need to create:

class IRExpr : public IRStmnt { public: IRExpr ( expr_type_t type , op_type_t op_type ); ~ IRExpr () = default ; expr_type_t get_expression_type (); std :: string to_string (); bool equals ( irexpr_t irexpr ); friend bool operator == ( IRExpr & , IRExpr & ); private: //! ir expression as string std :: string ir_expr_str ; //! expression type expr_type_t type ; };

IRBinOp implementation

One of the most important operations are binary operations, we have different mathematical operations we do with two operators, and then we store the result in another expression (commonly a register or a temporal register). This class is also the enum of the binary operations:

enum bin_op_t { // common arithmetic instructions ADD_OP_T , SUB_OP_T , S_MUL_OP_T , U_MUL_OP_T , S_DIV_OP_T , U_DIV_OP_T , MOD_OP_T , // common logic instructions AND_OP_T , XOR_OP_T , OR_OP_T , SHL_OP_T , SHR_OP_T , USHR_OP_T , };

The implementation includes the two operands as expressions, and finally another expression as the place where to store the result

class IRBinOp : public IRExpr { public: IRBinOp ( bin_op_t bin_op_type , irexpr_t result , irexpr_t op1 , irexpr_t op2 ); ~ IRBinOp () = default ; bin_op_t get_bin_op_type (); irexpr_t get_result (); irexpr_t get_op1 (); irexpr_t get_op2 (); std :: string to_string (); bool equals ( irbinop_t irbinop ); friend bool operator == ( IRBinOp & , IRBinOp & ); private: //! type of binary operation bin_op_t bin_op_type ; //! IRBinOp => IRExpr(result) = IRExpr(op1) <binop> IRExpr(op2) //! for the result we will have an IRExpr too. irexpr_t result ; //! now each one of the operators irexpr_t op1 ; irexpr_t op2 ; };

IRUnaryOp implementation

Similar as the previous operation, we have types of unary operations:

enum unary_op_t { NONE_UNARY_OP_T , INC_OP_T , DEC_OP_T , NOT_OP_T , NEG_OP_T , CAST_OP_T , // maybe not used in binary Z_EXT_OP_T , // zero extend S_EXT_OP_T // sign extend };

And because we have a cast operation, we have different casting operation enum values:

enum cast_type_t { NONE_CAST , TO_BYTE , TO_CHAR , TO_SHORT , TO_INT , TO_LONG , TO_FLOAT , TO_DOUBLE , TO_ADDR , TO_BOOLEAN , TO_CLASS , TO_VOID , TO_ARRAY , };

As part of the implementation, we have a normal constructor that is used to initialize the unary operations, and in case of cast operation, we have another constructor that also initializes the type of cast operation. Also, if the cast is to a class, we can specify the name of the class we are casting too:

class IRUnaryOp : public IRExpr { public: IRUnaryOp ( unary_op_t unary_op_type , irexpr_t result , irexpr_t op ); /** * @param cast_type: instruction is cast, specify type of cast. */ IRUnaryOp ( unary_op_t unary_op_type , cast_type_t cast_type , irexpr_t result , irexpr_t op ); /** * @param cast_type: instruction is cast, specify type of cast. * @param class_name: if cast is TO_CLASS, specify the name of the class. */ IRUnaryOp ( unary_op_t unary_op_type , cast_type_t cast_type , std :: string class_name , irexpr_t result , irexpr_t op ); ~ IRUnaryOp () = default ; unary_op_t get_unary_op_type (); irexpr_t get_result (); irexpr_t get_op (); void set_cast_type ( cast_type_t cast_type ); cast_type_t get_cast_type (); std :: string get_class_cast (); std :: string to_string (); bool equals ( irunaryop_t irunaryop ); friend bool operator == ( IRUnaryOp & , IRUnaryOp & ); private: //! type of unary operation unary_op_t unary_op_type ; //! used for casting operations cast_type_t cast_type ; //! Class casted to std :: string class_name ; //! IRUnaryOp => IRExpr(result) = <unaryop> IRExpr(op) //! an IRExpr for where the result is stored. irexpr_t result ; // operator irexpr_t op ; };

The operand and the result are two expressions (commonly are registers or temporal registers).

IRAssign implementation

The implementation of this operation is similar to the previous one, since we have only one operand and one result. But conceptually it is very different because this operation is mostly used to move values between registers (registers/temporal registers). So instead of operand and result, we have source and destination. This instruction shouldn’t be used to move from or to memory, since we already have load and store for that.

class IRAssign : public IRExpr { public: IRAssign ( irexpr_t destination , irexpr_t source ); ~ IRAssign () = default ; irexpr_t get_destination (); irexpr_t get_source (); std :: string to_string (); bool equals ( irassign_t irassign ); friend bool operator == ( IRAssign & , IRAssign & ); private: //! destination where the value will be stored. irexpr_t destination ; //! source expression from where the value is taken irexpr_t source ; };

IRPhi implementation

This instruction is a special instruction in an IR. An IR does not execute the code, but it represents the code. We have cases where the same variable can come from different paths, and depending on the path where the variable comes, its value is one or another. An example with source code would be like so:

#include <iostream> int absolute ( int x ) { if ( x < 0 ) { return - x ; } else { return x ; } } int main () { int value = - 42 ; int absValue = absolute ( value ); std :: cout << "Absolute value of " << value << " is " << absValue << std :: endl ; return 0 ; }

A simplified version of the IR, generated from absolute and generated with LLVM, would be like so:

define i32 @absolute ( i32 %x ) #0 { entry: %cmp = icmp slt i32 %x , 0 br i1 %cmp , label %if.then , label %if.end if.then: ; preds = %entry %neg = sub nsw i32 0 , %x br label %if.end if.end: ; preds = %if.then, %entry %retval.0 = phi i32 [ %x , %entry ], [ %neg , %if.then ] ret i32 %retval.0 }

We have a phi instruction that, depending on what the last executed incoming block was, the value of %retval.0 will be %x if the code came from %entry , or %neg if it came from %if.then .

My implementation includes a map, the map has as a key which identifies the block from where the variable comes from, and as value the expression with the variable (register/temporal register). Finally, we have another expression as result, which will be the final register which will contain the value that will be used from that block.

class IRPhi : public IRExpr { public: IRPhi (); ~ IRPhi () = default ; std :: unordered_map < uint32_t , irexpr_t > & get_params (); void add_param ( irexpr_t param , uint32_t id ); irexpr_t get_result (); void add_result ( irexpr_t result ); std :: string to_string (); bool equals ( irphi_t irphi ); friend bool operator == ( IRPhi & , IRPhi & ); private: irexpr_t result ; std :: unordered_map < uint32_t , irexpr_t > params ; };

IRCall implementation

The instruction IRCall was designed as a call to different types of functions. I implemented internal calls which are calls to functions in the binary, external calls which would be calls to library functions, and finally syscalls. The previous values are enum values in the code:

enum call_type_t { INTERNAL_CALL_T , // call to internal component EXTERNAL_CALL_T , // call to external library (example DLL, .so file, external component, etc) SYSCALL_T , // a syscall type NONE_CALL_T = 99 , // Not specified };

The instruction stores as an IRExpr a callee, later we will see a type IRCallee that implements these functions for Java-type languages. Then the instruction also contains a vector for the parameters and an optional return value.

class IRCall : public IRExpr { public: IRCall ( irexpr_t callee , std :: vector < irexpr_t > args ); IRCall ( irexpr_t callee , call_type_t call_type , std :: vector < irexpr_t > args ); ~ IRCall () = default ; irexpr_t get_callee (); const std :: vector < irexpr_t > & get_args () const ; std :: string to_string (); void set_ret_val ( irexpr_t ret_val ); irexpr_t get_ret_val (); call_type_t get_call_type (); bool equals ( ircall_t ircall ); friend bool operator == ( IRCall & , IRCall & ); private: //! Type of call call_type_t call_type ; //! Type representing the function/method called irexpr_t callee ; //! Vector with possible arguments std :: vector < irexpr_t > args ; //! Return value (if it's for example a register) irexpr_t ret_val ; };

The return value is optional, since we can have void return values, and parameters can be an empty vector.

IRLoad & IRStore implementations

I will put together these instructions since they represent a same concept. These two instructions are used to operate with memory. In the IR I tried to follow the convention pcode , and I created these instructions to operate with memory, so instead of working with memory directly in the operations, memory values are loaded in registers or in temporal registers. After that, we can operate with those values. The operations contains a destination and a source value expressed as IRExpr but it also has a size value (the size of the loaded or stored value), and also an index in case the memory is accessed using some index.

class IRLoad : public IRExpr { public: /** * @brief Constructor of IRLoad class, this class represent a load from memory (using memory or using register). * @param destination: register where the value will be stored. * @param source: expression from where the memory will be retrieved. * @param size: loaded size. * @return void */ IRLoad ( irexpr_t destination , irexpr_t source , std :: uint32_t size ); /** * @brief Constructor of IRLoad class, this class represent a load from memory (using memory or using register). * @param destination: register where the value will be stored. * @param source: expression from where the memory will be retrieved. * @param index: index from the load if this is referenced with an index. * @param size: loaded size. * @return void */ IRLoad ( irexpr_t destination , irexpr_t source , irexpr_t index , std :: uint32_t size ); ~ IRLoad () = default ; irexpr_t get_destination (); irexpr_t get_source (); irexpr_t get_index (); std :: uint32_t get_size (); std :: string to_string (); bool equals ( irload_t irload ); friend bool operator == ( IRLoad & , IRLoad & ); private: //! Register where the memory pointed by a register will be loaded. irexpr_t destination ; //! Expression from where memory is read. irexpr_t source ; //! Index if this is referenced by for example a register. irexpr_t index ; //! Size of loaded value std :: uint32_t size ; }; class IRStore : public IRExpr { public: /** * @brief Constructor of IRStore class, this represent an store to memory instruction. * @param destination: Expression where value is written to. * @param source: register with the value to be stored. * @param size: size of the stored value. * @return void */ IRStore ( irexpr_t destination , irexpr_t source , std :: uint32_t size ); /** * @brief Constructor of IRStore class, this represent an store to memory instruction. * @param destination: Expression where value is written to. * @param source: register with the value to be stored. * @param index: index where value is stored. * @param size: size of the stored value. * @return void */ IRStore ( irexpr_t destination , irexpr_t source , irexpr_t index , std :: uint32_t size ); ~ IRStore () = default ; irexpr_t get_destination (); irexpr_t get_source (); irexpr_t get_index (); std :: uint32_t get_size (); std :: string to_string (); bool equals ( irstore_t irstore ); friend bool operator == ( IRStore & , IRStore & ); private: //! Memory pointed by register where value will be stored. irexpr_t destination ; //! Expression with source of value to be stored. irexpr_t source ; //! Index if this is referenced by for example a register. irexpr_t index ; //! Size of stored value std :: uint32_t size ; };

IRZComp implementation

Since this IR was mainly implemented for Java-like languages, I implemented an instruction to compare with a zero value. There are different enum values that will tell the type of comparator:

enum zero_comp_t { EQUAL_ZERO_T , // == NOT_EQUAL_ZERO_T , // != LOWER_ZERO_T , // < GREATER_EQUAL_ZERO , // >= GREATER_ZERO_T , // > LOWER_EQUAL_ZERO // <= };

Then the instruction contains a register for the compared operand, and a register for storing the result. In both cases the values are provided as IRExpr :

class IRZComp : public IRExpr { public: /** * @brief Constructor of IRZComp, this is a comparison with zero. * @param comp: type of comparison (== or !=). * @param result: register or temporal register where result is stored. * @param reg: register used in the comparison. * @return void */ IRZComp ( zero_comp_t comp , irexpr_t result , irexpr_t reg ); ~ IRZComp () = default ; irexpr_t get_result (); irexpr_t get_reg (); zero_comp_t get_comparison (); std :: string to_string (); bool equals ( irzcomp_t irzcomp ); friend bool operator == ( IRZComp & , IRZComp & ); private: //! Register where result is stored irexpr_t result ; //! Register for comparison with zero. irexpr_t reg ; //! Type of comparison zero_comp_t comp ; };

IRBComp implementation

In the case, we do not compare with zero, but between two registers, we use this instruction. Again we have different types of comparisons:

enum comp_t { EQUAL_T , // == NOT_EQUAL_T , // != GREATER_T , // > GREATER_EQUAL_T , // >= LOWER_T , // < LOWER_EQUAL_T , // <= ABOVE_T , // (unsigned) > ABOVE_EQUAL_T , // (unsigned) >= BELOW_T , // (unsigned) < };

The instruction implementation is almost the same as the previous one, but this time with two registers as operators:

class IRBComp : public IRExpr { public: /** * @brief Constructor of IRBComp, this class represent a comparison between two types. * @param comp: type of comparison from an enum. * @param result: register or temporal register where result is stored. * @param reg1: first type where the comparison is applied. * @param reg2: second type where the comparison is applied. * @return void */ IRBComp ( comp_t comp , irexpr_t result , irexpr_t reg1 , irexpr_t reg2 ); ~ IRBComp () = default ; irexpr_t get_result (); irexpr_t get_reg1 (); irexpr_t get_reg2 (); comp_t get_comparison (); std :: string to_string (); bool equals ( irbcomp_t bcomp ); friend bool operator == ( IRBComp & , IRBComp & ); private: //! register or temporal register where result is stored irexpr_t result ; //! registers used in the comparisons. irexpr_t reg1 ; irexpr_t reg2 ; //! Type of comparison comp_t comp ; };

IRNew implementation

Java-type languages contain a new instruction for allocating memory for a new object. This instruction contains a result value (which will be a register) and a class instance that will be the type of the new object. We will later see in types the IRClass :

class IRNew : public IRExpr { public: /** * @brief Construct a new IRNew::IRNew object which represents * the creation of an instance of a class. * * @param result: result register where object is stored. * @param class_instance: IRClass object which represent the instance. * @return void */ IRNew ( irexpr_t result , irexpr_t class_instance ); ~ IRNew () = default ; irexpr_t get_result (); irexpr_t get_source_class (); std :: string to_string (); bool equals ( irnew_t new_i ); friend bool operator == ( IRNew & , IRNew & ); private: //! register where the result will be stored. irexpr_t result ; //! class type which will create a new instance. irexpr_t class_instance ; };

IRAlloca implementation

In opposite to a new instruction, we can allocate memory to store an array. In Java-type languages, the arrays are allocated as objects. IRAlloca has this purpose, allocating memory for an array. And for that reason IRAlloca has a size value:

class IRAlloca : public IRExpr { public: /** * @brief Construct a new IRAlloca object, this kind of * expression creates "allocates" memory for an array * having this class will be useful also for allocating * memory in other architectures. * * @param result register or address where data will be stored * @param type_instance type to create an array * @param size size of the given array */ IRAlloca ( irexpr_t result , irexpr_t type_instance , irexpr_t size ); ~ IRAlloca () = default ; irexpr_t get_result () irexpr_t get_source_type (); irexpr_t get_size (); std :: string to_string (); bool equals ( iralloca_t alloca ); friend bool operator == ( IRAlloca & , IRAlloca & ); private: //! register or variable where result will be stored. irexpr_t result ; //! type which it will create a new instance irexpr_t type_instance ; //! size of the allocated space irexpr_t size ; };

IRType implementation

Finally, we arrive to the objects that represent the operands of the operations, the memory, the classes, the fields, etc. For that reason, IRType contains an enum that specify the type:

enum type_t { REGISTER_TYPE = 0 , TEMP_REGISTER_TYPE , CONST_INT_TYPE , CONST_FLOAT_TYPE , FIELD_TYPE , MEM_TYPE , STRING_TYPE , CLASS_TYPE , CALLEE_TYPE , FUNDAMENTAL_TYPE , NONE_TYPE = 99 };

An idea I had was to store the order of the memory access. Commonly architectures like x86, x86-64 stores the memory in a format known as little-endian , but other architectures can store the data in big-endian , even the crazy idea of a middle-endian exists!!! You can read more about endianness at wikipedia:

enum mem_access_t { LE_ACCESS = 0 , //! little-endian access BE_ACCESS , //! big-endian access ME_ACCESS , //! This shouldn't commonly happen? NONE_ACCESS = 99 };

The instruction stores the type, a name to represent the type, even a field for annotations to store and show from the type.

class IRType : public IRExpr { public: /** * @brief Constructor of the IRType, this will be the generic type used for the others. * @param type: type of the class. * @param op_type: global type of operation * @param type_name: name used for representing the type while printing. * @param type_size: size of the type in bytes. * @return void */ IRType ( type_t type , op_type_t op_type , std :: string type_name , size_t type_size ); ~ IRType () = default ; std :: string get_type_name (); size_t get_type_size (); type_t get_type (); virtual std :: string get_type_str (); mem_access_t get_access (); void write_annotations ( std :: string annotations ); std :: string read_annotations (); std :: string to_string (); bool equal ( irtype_t type ); friend bool operator == ( IRType & , IRType & ); private: //! type value as a type_t type_t type ; //! name used to represent the type in IR representation. std :: string type_name ; //! size of the type, this can vary depending on architecture //! and so on. size_t type_size ; //! annotations are there for you to write whatever you want std :: string annotations ; };

IRReg implementation

The IRs commonly operate with values or with registers. These are the basic types in the operations. The registers can be those from the architecture using the name of that computer architecture, or like in SSA-form IRs we can use an infinite set of registers. Compilers when they transform from IR to assembly apply different optimizations to reduce the number of registers, and then to assign the registers from the IR, to those from the real machine. In the case of virtual architectures like dalvik, the VM has 64K registers for each called method, since these are virtual registers. The registers of MjolnIR contain an id, and depending on the architecture, the id can represent one register from that architecture or not. Although I didn’t use it, following Triton’s implementation, I created the next types (but I didn’t ever use them…):

const int x86_arch = 1 ; enum x86_regs_t /** * @brief X86 registers, enums for IR, sizes and strings. */ { // General purpose registers rax , eax , ax , ah , al , rbx , ebx , bx , bh , bl , rcx , ecx , cx , ch , cl , rdx , edx , dx , dh , dl , // pointer registers rdi , edi , di , rsi , esi , si , // stack registers rbp , ebp , bp , rsp , esp , sp , // program counter rip , eip , ip , // extended registers in x86-64 r8 , r8d , r8w , r8b , r9 , r9d , r9w , r9b , r10 , r10d , r10w , r10b , r11 , r11d , r11w , r11b , r12 , r12d , r12w , r12b , r13 , r13d , r13w , r13b , r14 , r14d , r14w , r14b , r15 , r15d , r15w , r15b , // flags for state representation eflags , mm0 , mm1 , mm2 , mm3 , mm4 , mm5 , mm6 , mm7 , zmm0 , zmm1 , zmm2 , zmm3 , zmm4 , zmm5 , zmm6 , zmm7 , zmm8 , zmm9 , zmm10 , zmm11 , zmm12 , zmm13 , zmm14 , zmm15 , zmm16 , zmm17 , zmm18 , zmm19 , zmm20 , zmm21 , zmm22 , zmm23 , zmm24 , zmm25 , zmm26 , zmm27 , zmm28 , zmm29 , zmm30 , zmm31 , mxcsr , cr0 , cr1 , cr2 , cr3 , cr4 , cr5 , cr6 , cr7 , cr8 , cr9 , cr10 , cr11 , cr12 , cr13 , cr14 , cr15 , cs , ds , es , fs , gs , ss , dr0 , dr1 , dr2 , dr3 , dr6 , dr7 }; static const std :: unordered_map < x86_regs_t , size_t > x86_regs_size = { { rax , 8 }, { eax , 4 }, { ax , 2 }, { ah , 1 }, { al , 1 }, { rbx , 8 }, { ebx , 4 }, { bx , 2 }, { bh , 1 }, { bl , 1 }, { rcx , 8 }, { ecx , 4 }, { cx , 2 }, { ch , 1 }, { cl , 1 }, { rdx , 8 }, { edx , 4 }, { dx , 2 }, { dh , 1 }, { dl , 1 }, { rdi , 8 }, { edi , 4 }, { di , 2 }, { rsi , 8 }, { esi , 4 }, { si , 2 }, { rip , 8 }, { eip , 4 }, { ip , 2 }, { r8 , 8 }, { r8d , 4 }, { r8w , 2 }, { r8b , 1 }, { r9 , 8 }, { r9d , 4 }, { r9w , 2 }, { r9b , 1 }, { r10 , 8 }, { r10d , 4 }, { r10w , 2 }, { r10b , 1 }, { r11 , 8 }, { r11d , 4 }, { r11w , 2 }, { r11b , 1 }, { r12 , 8 }, { r12d , 4 }, { r12w , 2 }, { r12b , 1 }, { r13 , 8 }, { r13d , 4 }, { r13w , 2 }, { r13b , 1 }, { r14 , 8 }, { r14d , 4 }, { r14w , 2 }, { r14b , 1 }, { r15 , 8 }, { r15d , 4 }, { r15w , 2 }, { r15b , 1 } }; static const std :: unordered_map < x86_regs_t , std :: string > x86_regs_name = { { rax , "rax" }, { eax , "eax" }, { ax , "ax" }, { ah , "ah" }, { al , "al" }, { rbx , "rbx" }, { ebx , "ebx" }, { bx , "bx" }, { bh , "bh" }, { bl , "bl" }, { rcx , "rcx" }, { ecx , "ecx" }, { cx , "cx" }, { ch , "ch" }, { cl , "cl" }, { rdx , "rdx" }, { edx , "edx" }, { dx , "dx" }, { dh , "dh" }, { dl , "dl" }, { rdi , "rdi" }, { edi , "edi" }, { di , "di" }, { rsi , "rsi" }, { esi , "esi" }, { si , "si" }, { rip , "rip" }, { eip , "eip" }, { ip , "ip" }, { r8 , "r8" }, { r8d , "r8d" }, { r8w , "r8w" }, { r8b , "r8b" }, { r9 , "r9" }, { r9d , "r9d" }, { r9w , "r9w" }, { r9b , "r9b" }, { r10 , "r10" }, { r10d , "r10d" }, { r10w , "r10w" }, { r10b , "r10b" }, { r11 , "r11" }, { r11d , "r11d" }, { r11w , "r11w" }, { r11b , "r11b" }, { r12 , "r12" }, { r12d , "r12d" }, { r12w , "r12w" }, { r12b , "r12b" }, { r13 , "r13" }, { r13d , "r13d" }, { r13w , "r13w" }, { r13b , "r13b" }, { r14 , "r14" }, { r14d , "r14d" }, { r14w , "r14w" }, { r14b , "r14b" }, { r15 , "r15" }, { r15d , "r15d" }, { r15w , "r15w" }, { r15b , "r15b" } };

The first implementation of MjolnIR wasn’t using an SSA form but directly used the values from Dalvik. Later I implemented the SSA form, and for that the IRReg contains a sub_id field, to express the SSA form in the registers:

class IRReg : public IRType { public: /** * @brief Constructor of IRReg type. * @param reg_id: id of the register this can be an enum if is a well known register, or just an id. * @param current_arch: curreng architecture to create the register. * @param type_name: string for representing the register. * @param type_size: size of the register. * @return void */ IRReg ( std :: uint32_t reg_id , int current_arch , std :: string type_name , size_t type_size ); /** * @brief Constructor of IRReg type. * @param reg_id: id of the register this can be an enum if is a well known register, or just an id. * @param reg_sub_id: sub id of the register used in the SSA form. * @param current_arch: curreng architecture to create the register. * @param type_name: string for representing the register. * @param type_size: size of the register. * @return void */ IRReg ( std :: uint32_t reg_id , std :: int32_t reg_sub_id , int current_arch , std :: string type_name , size_t type_size ); ~ IRReg () = default ; std :: uint32_t get_id (); std :: uint32_t get_sub_id (); int get_current_arch (); std :: string get_type_str (); mem_access_t get_access (); std :: string to_string (); bool same ( irreg_t reg ); bool equal ( irreg_t reg ); friend bool operator == ( IRReg & , IRReg & ); private: //! id of the register, this will be an enum //! in case the arquitecture contains a known set //! of registers, for example x86-64 will have a //! well known set of registers, e.g. EAX, AX, RSP //! RIP, etc. //! Other arquitectures like DEX VM will not have //! an specific set. std :: uint32_t id ; //! sub id of the register, this sub id will be used //! in the SSA form, and used to check if a register //! is the same than other. std :: int32_t sub_id ; int current_arch ; };

IRTempReg implementation

In some cases, the instructions implement intrinsic operands, an IR instead of representing instrinsic operands it can use registers. In MjolnIR for doing so, I implemented temporal registers. For example, if a language uses 3 operands like the next:

int x = 2 ; int y = 1 ; int z = 5 ; int d = x + y + z ;

Commonly an IR instruction only contains two operands, and then the result value. For that reason we can use a temporal register to represent the previous sequence of instructions in the next way:

int x = 2 ; int y = 1 ; int z = 5 ; temp_reg1 = x + y ; int d = temp_reg1 + z ;

Now the instruction only has two operands. For doing these operations, MjolnIR uses IRTempReg operands:

class IRTempReg : public IRType { public: /** * @brief Constructor of IRTempReg type. * @param reg_id: id of the register this will be an incremental id. * @param type_name: string for representing the register. * @param type_size: size of the register. * @return void */ IRTempReg ( std :: uint32_t reg_id , std :: string type_name , size_t type_size ); ~ IRTempReg () = default ; std :: uint32_t get_id (); std :: string get_type_str (); mem_access_t get_access (); std :: string to_string (); bool equal ( irtempreg_t temp_reg ); friend bool operator == ( IRTempReg & , IRTempReg & ); private: //! This id will be just an incremental number //! as these are temporal registers. std :: uint32_t id ; };

IRConstInt implementation

This object is just a wrapper for constant integer values. It is also used to store metadata like if the value is signed or not, and the byte order (this maybe was a foolish decision on my side…). Since a possible optimization for obfuscated values was constant folding, I implemented the different operators on the class. The operators internally check if the values are signed or unsigned, and finally return another IRConstInt with the proper size:

IRConstInt operator + ( IRConstInt & a , IRConstInt & b ) { if ( a . is_signed ) { int64_t result = static_cast < int64_t > ( a . value ) + static_cast < int64_t > ( b . value ); IRConstInt res ( result , a . is_signed , a . byte_order , a . get_type_name (), a . get_type_size ()); return res ; } uint64_t result = a . value + b . value ; IRConstInt res ( result , a . is_signed , a . byte_order , a . get_type_name (), a . get_type_size ()); return res ; }

Next, the implementation of IRConstInt :

class IRConstInt : public IRType { public: /** * @brief Constructor of IRConstInt this represent any integer used in the code. * @param value: value of the constant integer * @param is_signed: is signed value (true) or unsigned (false). * @param byte_order: byte order of the value. * @param type_name: name used for representing the value. * @param type_size: size of the integer. * @return void */ IRConstInt ( std :: uint64_t value , bool is_signed , mem_access_t byte_order , std :: string type_name , size_t type_size ); ~ IRConstInt () = default ; bool get_is_signed (); std :: string get_type_str (); mem_access_t get_access (); uint64_t get_value_unsigned (); int64_t get_value_signed (); std :: string to_string (); bool equal ( irconstint_t const_int ); friend bool operator == ( IRConstInt & , IRConstInt & ); friend IRConstInt operator + ( IRConstInt & a , IRConstInt & b ); friend IRConstInt operator - ( IRConstInt & a , IRConstInt & b ); friend IRConstInt operator / ( IRConstInt & a , IRConstInt & b ); friend IRConstInt operator * ( IRConstInt & a , IRConstInt & b ); friend IRConstInt operator % ( IRConstInt & a , IRConstInt & b ); friend IRConstInt operator & ( IRConstInt & a , IRConstInt & b ); friend IRConstInt operator | ( IRConstInt & a , IRConstInt & b ); friend IRConstInt operator ^ ( IRConstInt & a , IRConstInt & b ); friend IRConstInt operator << ( IRConstInt & a , IRConstInt & b ); friend IRConstInt operator >> ( IRConstInt & a , IRConstInt & b ); friend IRConstInt operator ++ ( IRConstInt & a , int ); friend IRConstInt operator -- ( IRConstInt & a , int ); friend IRConstInt operator ! ( IRConstInt & a ); friend IRConstInt operator ~ ( IRConstInt & a ); private: //! Value of the integer std :: uint64_t value ; //! Check to know if the constant is a unsigned //! or signed value. bool is_signed ; //! byte order of the value. mem_access_t byte_order ; };

IRMemory implementation

Memory operands are specified as the memory address, an offset, a byte order and a size.

class IRMemory : public IRType { public: /** * @brief IRMemory constructor this represent a memory address with accessed offset and size. * @param mem_address: address of the memory. * @param offset: offset accessed (commonly 0). * @param byte_order: byte order of the memory (LE, BE, ME?). * @param type_name: memory representation with a string. * @param type_size: size of the memory. * @return void */ IRMemory ( std :: uint64_t mem_address , std :: int32_t offset , mem_access_t byte_order , std :: string type_name , size_t type_size ); ~ IRMemory () = default ; std :: uint64_t get_mem_address (); std :: int32_t get_offset (); std :: string get_type_str (); mem_access_t get_access (); std :: string to_string (); bool equal ( irmemory_t memory ); friend bool operator == ( IRMemory & , IRMemory & ); private: //! accessed address std :: uint64_t mem_address ; //! offset of the memory accessed std :: int32_t offset ; //! byte order of the memory. mem_access_t byte_order ; };

IRString implementation

Languages like C or C++, represent strings as constant strings in read-only memory, and then a pointer is used to access that data. Java-type languages use String objects. This operand is used to represent those string constant values:

class IRString : public IRType { public: /** * @brief Constructor of IRString class, this represent strings used in code. * @param str_value: value of that string. * @param type_name: some meaninful string name. * @param type_size: size of the type (probably here string length) * @return void */ IRString ( std :: string str_value , std :: string type_name , size_t type_size ); ~ IRString () = default ; std :: string get_str_value (); std :: string get_type_str (); mem_access_t get_access (); std :: string to_string (); bool equal ( irstring_t str ); friend bool operator == ( IRString & , IRString & ); private: //! string value, probably nothing more will be here std :: string str_value ; };

IRClass implementation

For instructions like IRNew a class is provided as parameter to specify the object type of the created object. IRClass just stores the fully qualified name of the class.

class IRClass : public IRType { public: /** * @brief Constructor of IRClass, this represent the name of a class * that is assigned as a type. * @param class_name: name of the class. * @param type_name: should be the same value than previous one. * @param type_size: should be 0. * @return void */ IRClass ( std :: string class_name , std :: string type_name , size_t type_size ); ~ IRClass () = default ; std :: string get_class (); std :: string get_type_str (); mem_access_t get_access (); std :: string to_string (); bool equal ( irclass_t class_ ); friend bool operator == ( IRClass & , IRClass & ); private: //! class name including path, used for instructions //! of type const-class std :: string class_name ; };

IRCallee implementation

In binaries created from languages like C or C++, a call specifies a register or an address where to jump, and if there are no symbols, an analysis must be done to obtain how many parameters are passed to the call. In Java-type languages, these called methods are specified by class, name and the description of the method. That description contains the return type and the parameters. MjolnIR was thought to support both, but I implemented for Dalvik, so I had all the method descriptions. IRCallee stores all that information:

class IRCallee : public IRType { public: /** * @brief Constructor of IRCallee this represent any function/method called by a caller! * @param addr: address of the function/method called (if available). * @param name: name of the function/method called (if available). * @param class_name: name of the class from the method called (if available). * @param n_of_params: number of the parameters for the function/method (if available). * @param description: description of the parameters from the function/method (if available). * @param type_name: some meaninful string name. * @param type_size: size of the type (probably here 0) * @return void */ IRCallee ( std :: uint64_t addr , std :: string name , std :: string class_name , int n_of_params , std :: string description , s