Our goal in this paper was to examine how exposure to misinformation online during the 2020 election compared with exposure during the 2016 election. By adopting the analytical approach of Guess et al.6, who examined exposure to untrustworthy websites during the 2016 election, we assessed exposure to untrustworthy websites among a nationally representative sample during the 2020 election and compared it with 2016 exposure. Our web browsing data, comprising 7.5 million URLs, capture the real-world media use of N = 1,151 Americans as real political events unfolded. Our analysis does not rely on self-reported measures of media exposure, which tend to be inaccurate compared with passively tracked behavioural measures of news exposure27,28.

Overall, we found that a significantly smaller percentage of Americans were exposed to untrustworthy websites in 2020 (26.2%) compared with 2016 (44.3%). We found this decrease despite using a database of untrustworthy sites over three times the size of the database used in Guess et al.6 to identify visits to untrustworthy websites in our participants’ web browsing behaviour, which increased our capacity to detect such visits. This decline runs contrary to expectations that the run-up to the 2020 election would lead to record numbers of people being exposed to misinformation11 (though see refs. 17,19,21 for reasons to predict lower misinformation exposure in 2020). We also observed that those who did visit untrustworthy websites in 2020 tended to visit fewer untrustworthy sites overall and spent less time on average on each site than in 2016.
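The three exposure measures compared here (share of people exposed, number of distinct untrustworthy sites visited, and time spent per site) can be sketched as follows. This is a minimal, unweighted illustration under assumed inputs: the `Visit` record, its field names and the example domains are hypothetical, and published estimates of this kind additionally apply survey weights, which this sketch omits.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Visit:
    participant: str  # hypothetical participant ID
    domain: str       # domain of the visited URL
    seconds: float    # time spent on the page


def exposure_metrics(visits, participants, untrustworthy):
    """Unweighted sketch of three exposure measures.

    Returns (share of participants exposed,
             mean number of distinct untrustworthy domains among the exposed,
             mean seconds per untrustworthy domain among the exposed).
    """
    sites = defaultdict(set)      # participant -> untrustworthy domains visited
    seconds = defaultdict(float)  # participant -> total seconds on those domains
    for v in visits:
        if v.domain in untrustworthy:
            sites[v.participant].add(v.domain)
            seconds[v.participant] += v.seconds

    exposed = [p for p in participants if sites.get(p)]
    if not exposed:
        return 0.0, 0.0, 0.0
    share = len(exposed) / len(participants)
    mean_sites = sum(len(sites[p]) for p in exposed) / len(exposed)
    mean_secs_per_site = sum(seconds[p] / len(sites[p]) for p in exposed) / len(exposed)
    return share, mean_sites, mean_secs_per_site


visits = [
    Visit("a", "fake-one.example", 60), Visit("a", "fake-two.example", 30),
    Visit("a", "news.example", 100), Visit("b", "fake-one.example", 20),
    Visit("c", "news.example", 45),
]
print(exposure_metrics(visits, ["a", "b", "c", "d"], {"fake-one.example", "fake-two.example"}))
# (0.5, 1.5, 32.5)
```

Time per site is computed per person first and then averaged across exposed people, so heavy users do not dominate the average; other aggregation choices are possible.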

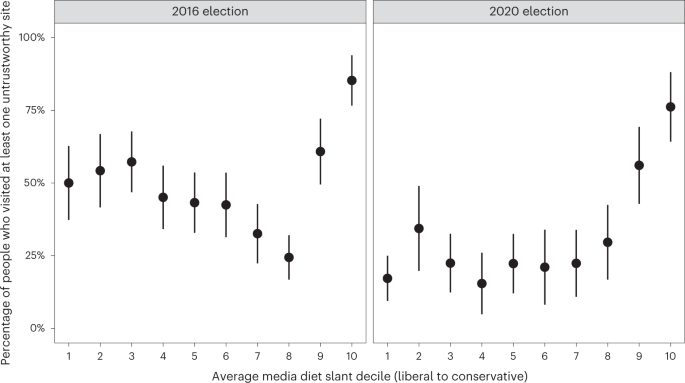

In 2016, certain groups were more likely than others to visit untrustworthy websites: older adults more than younger adults, supporters of Donald Trump more than supporters of Hillary Clinton, and people with ideologically extreme media diets more than those with ideologically moderate media diets. We found that these groups were still more likely to visit untrustworthy websites in 2020 (with Joe Biden supporters taking the place of Hillary Clinton supporters). However, each group’s level of exposure was significantly lower in 2020 than in 2016. While it is encouraging that the likelihood of encountering untrustworthy websites appears to be declining over time for older adults, Trump supporters and those with ideologically extreme media diets, the data reaffirm the need to support these groups, both through future research and by providing resources that build resilience to misinformation.

Finally, we found differences in how people came to visit untrustworthy websites in 2020 compared with 2016. Specifically, Facebook and webmail referred significantly fewer visits to untrustworthy websites in 2020 than in 2016. While further research is needed to elucidate these platforms’ changing role in propagating misinformation (see below), our findings suggest that Facebook and webmail may have played a smaller role in directing people to misinformation on the web in the 2020 election than in the 2016 election.
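One way to attribute a visit to a referring platform is to look at the page a participant was on immediately beforehand. The sketch below is a hypothetical illustration of that idea, not the procedure used here or by Guess et al.6: the referrer mapping, the webmail domains and the 30-second window are all assumptions chosen for the example.

```python
from collections import Counter
from datetime import datetime, timedelta

# Hypothetical mapping from referring domains to platform categories.
REFERRER_CATEGORIES = {
    "facebook.com": "Facebook",
    "mail.google.com": "webmail",
    "outlook.live.com": "webmail",
    "mail.yahoo.com": "webmail",
}


def referral_counts(log, untrustworthy, window=timedelta(seconds=30)):
    """Count referrals into untrustworthy domains for one participant.

    `log` is a time-ordered list of (timestamp, domain) pairs. A visit is
    attributed to the previous domain if it differs from the current one and
    the gap between the two is at most `window` (an assumed threshold).
    """
    counts = Counter()
    for (prev_time, prev_domain), (time, domain) in zip(log, log[1:]):
        if domain not in untrustworthy or prev_domain == domain:
            continue  # not an untrustworthy visit, or navigation within one site
        if time - prev_time <= window:
            counts[REFERRER_CATEGORIES.get(prev_domain, "other")] += 1
    return counts


t0 = datetime(2020, 10, 20, 9, 0)
log = [
    (t0, "facebook.com"),
    (t0 + timedelta(seconds=10), "fake-one.example"),
    (t0 + timedelta(seconds=20), "fake-one.example"),
    (t0 + timedelta(minutes=5), "mail.google.com"),
    (t0 + timedelta(minutes=5, seconds=5), "fake-two.example"),
]
print(referral_counts(log, {"fake-one.example", "fake-two.example"}))
```

Comparing such counts across years (for example, the share of untrustworthy visits whose referrer is Facebook) is the kind of contrast reported above; the window length is a sensitivity parameter worth varying.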

While our data suggest that exposure to untrustworthy websites declined at the population level, our results should not be interpreted as indicating that misinformation is somehow less of a problem than it was previously. While exposure was lower in 2020 than in 2016, many people were still exposed to untrustworthy sites. Extrapolating our results suggests that nearly 68 million Americans made a total of 1.5 billion visits to untrustworthy sites during the 2020 election. Furthermore, even if a smaller percentage of Americans were exposed to misinformation online in 2020, those exposures could have played a larger role in radicalization or in influencing participation in acts of political violence (for example, the 6 January 2021 insurrection). In short, misinformation that reaches fewer people can still have serious consequences29. While our data and approach are limited in their ability to speak to the consequences of exposure, it will be essential for future scholarship on misinformation to consider both exposure to misinformation and the effect of that exposure at the population and individual levels.
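The population-level figure follows from scaling the sample share by the size of the adult population. As a rough check, assuming about 258.3 million US adults (the 2020 Census count of people aged 18 and over; the population base behind the published extrapolation may differ), the arithmetic runs as follows.

```python
# Assumed population base: ~258.3 million US adults (2020 Census, ages 18+).
US_ADULTS = 258_300_000
SHARE_EXPOSED_2020 = 0.262  # share of the sample exposed in 2020 (from the text)
TOTAL_VISITS = 1.5e9        # extrapolated total visits (from the text)

exposed_adults = SHARE_EXPOSED_2020 * US_ADULTS
visits_per_exposed_adult = TOTAL_VISITS / exposed_adults

print(f"{exposed_adults / 1e6:.1f} million adults exposed")  # 67.7 million adults exposed
print(f"{visits_per_exposed_adult:.0f} visits per exposed adult")  # 22 visits per exposed adult
```

Under this assumed base, the two reported figures jointly imply roughly 22 visits per exposed adult over the study period.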

We make several contributions to the misinformation literature. First, our study demonstrates the value of re-applying the same analytical approach of prior work to examine changes in a mediated process (for example, exposure to untrustworthy websites) longitudinally. By collecting the same data (URLs visited during web browsing) from the same source (YouGov Pulse) among the same population (nationally representative sample of American adults) for a similar period of time around the US presidential election as Guess et al.6, we were able to make direct comparisons of exposure to untrustworthy websites between 2016 and 2020. The apples-to-apples comparisons afforded by this approach allowed us to precisely examine how the patterns in untrustworthy website exposure identified in 2016 changed in 2020. In addition, we incorporated improvements to Guess et al.’s6 approach into our analysis that accounted for differences between the 2016 and 2020 media environments. For example, we introduced NewsGuard’s database of sites that repeatedly publish false content as an additional source to identify visits to untrustworthy websites in people’s web browsing. One reason the introduction of NewsGuard was important is that the untrustworthy website databases used by Guess et al.6 were primarily based on websites circulating during the 2016 election, but fake news websites are often ephemeral (that is, the domains go defunct after short periods of time30,31). NewsGuard’s database (which is updated weekly) allowed us to have more confidence that our database of untrustworthy sites was sufficiently up to date to match changes in the fake news ecosystem that have occurred since 2016.
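Combining the 2016 site list with NewsGuard’s ratings amounts to a set union over normalized domain names. A minimal sketch, assuming both sources are available as plain lists of domains; the normalization rules (lowercasing, dropping a leading `www.` and any trailing dot) and all domains other than the one quoted in this paper are illustrative assumptions.

```python
def normalize_domain(domain: str) -> str:
    """Lowercase, drop a trailing dot and a leading 'www.' (assumed rules)."""
    d = domain.strip().lower().rstrip(".")
    return d[4:] if d.startswith("www.") else d


def merge_site_lists(list_2016, newsguard):
    """Return (combined set of domains, domains contributed only by NewsGuard)."""
    old = {normalize_domain(d) for d in list_2016}
    new = {normalize_domain(d) for d in newsguard}
    return old | new, new - old


combined, added = merge_site_lists(
    ["www.obamawatcher.com", "Defunct-Fake.example"],
    ["obamawatcher.com", "newer-fake.example"],
)
print(sorted(combined))  # ['defunct-fake.example', 'newer-fake.example', 'obamawatcher.com']
print(sorted(added))     # ['newer-fake.example']
```

Normalizing before the union matters because the same site may be listed in `www.` and bare forms in different sources; without it, the merged list would contain duplicates and lookups could miss matches.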

Second, our findings indicate that the groups who were more likely to visit untrustworthy websites in 2016 were largely the same groups who were more likely to do so in 2020. The persistence of these trends highlights the importance of examining why populations such as older adults appear to be more susceptible to online misinformation and how they can be supported through interventions and other resources to build resilience to misinformation32,33. Our updated findings, which reveal that approximately one-third of the older adults in our sample were exposed to untrustworthy websites, make clear that it is important to continue studying the factors responsible for older adults’ vulnerability.

In addition to this pattern among older adults, the 2016 pattern of conservatives being more likely than liberals to visit untrustworthy sites persisted in 2020. Research has begun to identify why conservative individuals appear more likely to engage with misinformation online34. One reason might be that the supply of misinformation is greater on the ideological right than on the left35,36. Relatedly, it could be that, relative to liberal media diets, more ideologically conservative media diets are more likely to expose users to misinformation through features such as algorithmic curation and community structures. The left may also be more likely to circulate misinformation via modalities other than website links (for example, social media posts, memes and other image-based formats) that are more difficult to detect using URL-based web tracking methods. Our results suggest an ongoing need for future work to investigate these potential causes.

Third, we provide evidence that Americans’ visits to untrustworthy websites were less likely to be referred by Facebook in 2020 than in 2016. This finding is noteworthy given the scrutiny that members of Congress and the American public have directed towards the role of platforms such as Facebook in the proliferation of misinformation37,38. Future work should endeavour to better understand why Facebook’s role in referring people to untrustworthy websites appears to be shrinking. Does it indicate the efficacy with which Facebook is implementing programmes and policies to label or flag untrustworthy content? Or might it suggest a more fundamental behavioural change in how people use Facebook and other social media platforms? For instance, people may be less likely to click links to external websites now than in the past, preferring to stay on the platform.

Finally, our findings can inform policymaking and public discourse around misinformation. Our findings reaffirm that certain groups of people are more likely to encounter misinformation, suggesting a need for more focused policy initiatives centred on the groups with the greatest need for support in dealing with misinformation. Moreover, our findings join others suggesting that the attention the media, politicians and the public devote to fake news may be disproportionate to people’s actual exposure to it7,24. Given evidence that (1) the overwhelming majority of news consumed by the population is not misinformation3 and (2) exposure to discourse around fake news can erode individuals’ trust in news media25, we may need to consider the nature of the attention society pays to the problem of misinformation relative to other ongoing national and international crises. For example, the emphasis on misinformation in media and political discourse since 2016 may be partly to blame for the ongoing erosion of trust in media institutions in the United States and worldwide39. Our findings point to the need for more research, and also for more grounded communication of that research, to appropriately contextualize this phenomenon.

Of course, our study should be interpreted in light of its limitations. The most noteworthy relate to our use of URL logging to estimate exposure to untrustworthy websites. First, untrustworthy websites were operationalized at the domain level. That is, in our data, an untrustworthy website was a web domain that NewsGuard rated as repeatedly publishing false information, or that was imported from the database used by Guess et al.6 (for example, www.obamawatcher.com), rather than a specific web page or article (for example, www.obamawatcher.com/2020/03/michelles-fake-degrees). We adopted this operationalization largely because, to protect participant privacy, most URLs captured by YouGov contain only domains rather than full URLs. While many studies examining exposure to misinformation have taken this approach3, there is undoubtedly misinformation hosted on domains that do not repeatedly publish false information but do so occasionally. Domain-level measures of exposure do not capture these instances of misinformation.
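Domain-level operationalization means every logged URL is reduced to its host before lookup, so any page on a listed domain counts as an exposure and any page on an unlisted domain does not. A minimal sketch using Python’s standard `urllib.parse`; the `www.` stripping rule and the unlisted example domain are assumptions.

```python
from urllib.parse import urlsplit


def domain_of(url: str) -> str:
    """Reduce a logged URL (with or without a scheme) to its host name."""
    if "://" not in url:
        # Without '//', urlsplit treats the host as part of the path.
        url = "http://" + url
    host = (urlsplit(url).hostname or "").rstrip(".")
    return host[4:] if host.startswith("www.") else host


def is_untrustworthy(url: str, domains: set) -> bool:
    return domain_of(url) in domains


listed = {"obamawatcher.com"}
print(is_untrustworthy("www.obamawatcher.com/2020/03/michelles-fake-degrees", listed))  # True
print(is_untrustworthy("https://www.obamawatcher.com", listed))  # True
print(is_untrustworthy("https://unlisted-outlet.example/one-false-story", listed))  # False
```

Because the match is on the exact host, a subdomain of a listed site would be missed; matching registrable domains (for example, via the Public Suffix List) is one way to extend it. The last line also illustrates the limitation described above: a one-off false article on an unlisted domain is not counted.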

A second limitation of URL-based browsing data is that it only identifies content that leads to a URL being produced40. Crucially, this means that for web pages that display content dynamically while maintaining a static URL, we only know that a person visited that static URL, not what content they saw while on it. Take, for example, Facebook’s feed. When a user visits www.facebook.com, they can scroll through their feed without the active URL in their web browser, www.facebook.com, ever changing. Thus, while an individual may be exposed to a variety of (mis)information while scrolling (in posts or links shared by others), we only observe the instances in which they click on an external link that takes them away from their feed to a new website.
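The consequence of logging URLs rather than rendered content can be illustrated with a toy logger that records an entry only when the active URL changes. This is a hypothetical simplification of how a browser plugin might behave, not a description of YouGov’s software.

```python
def logged_urls(events):
    """Toy URL logger.

    `events` is a time-ordered sequence of (active_url, content_on_screen)
    pairs. Only changes in the active URL are recorded; content shown while
    the URL stays the same (for example, posts scrolled past in a feed)
    leaves no trace in the log.
    """
    log = []
    for url, _content in events:
        if not log or log[-1] != url:
            log.append(url)
    return log


events = [
    ("www.facebook.com", "post from a friend"),
    ("www.facebook.com", "shared headline from an untrustworthy site"),
    ("www.facebook.com", "advertisement"),
    ("www.obamawatcher.com", "article opened from a link"),
]
print(logged_urls(events))  # ['www.facebook.com', 'www.obamawatcher.com']
```

The headline seen in the feed is invisible in the log; only the click-through to the external site is recorded.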

Additionally, participants collected these URL data by installing plugins in their web browsers. Thus, we only capture individuals’ behaviours within web browsers. Individuals’ online behaviours outside of web browsers, such as through apps, do not appear in our dataset. This nuance may be especially relevant for mobile internet use, which is more likely to occur via mobile apps than mobile web browsers41. Indeed, among our participants, we found that more individuals were exposed to an untrustworthy website on desktop/laptop computers (34.2%; 95% CI 29.4% to 39.1%) than on smartphones and tablets (13%; 95% CI 8.9% to 17.2%). As the consumption of news and political information increasingly occurs on mobile devices, it becomes all the more important for researchers to invest in methods that allow for the collection of mobile browsing and app-use data to increase the validity of inferences about online news exposure42.
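Device-specific exposure shares with confidence intervals can be computed separately for each device type from per-participant indicators. The sketch below is one common approach, assumed rather than taken from our analysis: a survey-weighted proportion with a normal-approximation interval based on Kish’s effective sample size. The weights and indicators are hypothetical.

```python
import math


def weighted_share_ci(flags, weights, z=1.96):
    """Survey-weighted proportion with an approximate 95% CI.

    flags:   per-participant booleans (e.g. exposed on a smartphone or tablet)
    weights: per-participant survey weights
    Uses Kish's effective sample size n_eff = (sum w)^2 / sum w^2.
    """
    total = sum(weights)
    p = sum(w for f, w in zip(flags, weights) if f) / total
    n_eff = total ** 2 / sum(w * w for w in weights)
    se = math.sqrt(p * (1 - p) / n_eff)
    return p, max(0.0, p - z * se), min(1.0, p + z * se)


desktop_flags = [True, False, False, True]
weights = [1.0, 1.0, 1.0, 1.0]
print(weighted_share_ci(desktop_flags, weights))
```

A participant can be exposed on both device types, and the denominator for each device is an analytic choice, so device-specific shares need not add up to the overall share.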

It is important to note that these limitations affect both our 2020 data collection and Guess et al.’s6 2016 data collection. Nevertheless, it is crucial for future research on misinformation exposure to contend with the limitations of web browsing data. Ultimately, only social media companies possess the data on user behaviour that could most accurately shed light on why their role in referring individuals to untrustworthy websites appears to be decreasing over time. Unfortunately, social media platforms rarely share data with misinformation researchers43. While initiatives such as Social Science One have attempted to grant scholars access to data from social media platforms such as Facebook44, getting academic researchers access to platform data has proven difficult45,46. The limitations of our study, and those faced by other misinformation research, highlight the importance of social media platforms working with academic and other third-party researchers to better understand the complex dynamics of exposure to and engagement with (mis)information on their platforms.

That said, other current data collection approaches could help fill in the gaps in online behaviour missed by the URL logging method. For example, Screenomics captures screenshots from individuals’ devices to understand their moment-by-moment smartphone usage, including the use of apps and information contained within system notifications47,48. The Screenomics method has been used to study exposure to political information49. Future research on exposure to online misinformation should triangulate across several data sources to gain a more complete portrait of individuals’ online media use and the role that misinformation plays in it.

Our findings indicate a relatively across-the-board decline in exposure to untrustworthy websites from 2016 to 2020, but why did this decline occur? We offer a few candidate explanations, but future work and additional data will be needed to test them more directly.

First, exposure to misinformation may have shifted, more in 2020 than in 2016, to places outside the web browser, such as text messaging or emerging social media apps such as WhatsApp and TikTok. Indeed, people increasingly report getting their news regularly via WhatsApp50 and social media platforms such as Reddit and TikTok51, and there is concern about the spread of misinformation on these platforms52,53.

Second, the data collection time frame used by Guess et al.6 and adopted by us (4 weeks before election day and 1 week after) may have captured different misinformation dynamics around the 2020 and 2016 elections. Specifically, the 2020 election was followed by a period in which sitting president Donald Trump made a series of claims that election fraud had caused him to receive fewer votes than his opponent, Joe Biden. This period included the announcement on 7 November that Joe Biden had won the election, which Donald Trump refused to concede. During this time, much misinformation about election fraud circulated online54. Comparatively, the aftermath of the 2016 election may not have been as rife with online misinformation as the aftermath of the 2020 election. Because Guess et al.’s6 (and thus our) data collection period included more time before the election than after it, we may have missed some of the post-election misinformation that distinguished 2020 from 2016. This demonstrates that even similar events (for example, presidential elections) may feature different dynamics at different points in time, which must be kept in mind when comparing their effects on media consumption behaviours.
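The asymmetry of the collection window is easy to see with concrete dates. A minimal sketch, assuming “4 weeks” and “1 week” mean 28 and 7 calendar days around election day (8 November 2016 and 3 November 2020) with inclusive endpoints; the exact boundaries used in the data collection may differ.

```python
from datetime import date, timedelta

ELECTION_DAYS = {2016: date(2016, 11, 8), 2020: date(2020, 11, 3)}


def collection_window(year, weeks_before=4, weeks_after=1):
    """Return (start, end) dates of the assumed collection window, inclusive."""
    day = ELECTION_DAYS[year]
    return day - timedelta(weeks=weeks_before), day + timedelta(weeks=weeks_after)


for year in (2016, 2020):
    start, end = collection_window(year)
    print(year, start, end)
# 2016 2016-10-11 2016-11-15
# 2020 2020-10-06 2020-11-10

start, end = collection_window(2020)
print(start <= date(2020, 11, 7) <= end)  # True: the 7 November announcement falls inside
```

Only 7 of the 35 days fall after election day, so most of the post-election period in 2020 lies outside this window.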

Third, as noted in our discussion of limitations, URL-tracking methods only log instances in which a URL is actually clicked and visited. Visits to untrustworthy websites may have decreased from 2016 to 2020 because people were increasingly exposed to untrustworthy website content on pages that display content dynamically under a static URL, for example, while scrolling through the Facebook feed or Twitter timeline. Indeed, evidence suggests that relatively few people click on news links posted on social media websites55,56, yet they can be influenced by the information in headlines57. This propensity to stay on the platform rather than click through to external websites may also be increasing over time58. Such a change in user behaviour could also help explain why we found that Facebook played a smaller role in referring people to untrustworthy websites in 2020 than in 2016.

There are two explanations for the decline in exposure to untrustworthy websites from 2016 to 2020 that we can address with our data. First, it is possible that the decline in untrustworthy website consumption from 2016 to 2020 reflected a broader decline in online news consumption overall, both credible and untrustworthy. Using the news websites contained in Bakshy et al.26 and those rated by NewsGuard as not repeatedly publishing false content, we find that Americans’ exposure to hard news websites in 2016 did not significantly differ from exposure to hard news sites in 2020. The number of Americans exposed to at least one hard news site, the average number of hard news sites accessed, and the average amount of time spent on hard news sites were similar in 2016 and 2020 (Pages 5–8 of the Supplementary Materials). These results suggest that untrustworthy news exposure uniquely declined from 2016 to 2020 while exposure to trustworthy news remained constant.
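Whether a change in an exposure share between two independent samples is statistically significant can be assessed with a confidence interval for the difference in proportions. The sketch below uses the unpooled normal approximation and treats each year as a simple random sample of size n; that is an assumption for illustration, and survey weights would replace n with an effective sample size. The inputs are hypothetical, not our estimates.

```python
import math


def diff_in_proportions_ci(p1, n1, p2, n2, z=1.96):
    """Approximate 95% CI for p1 - p2 from two independent samples."""
    diff = p1 - p2
    se = math.sqrt(p1 * (1 - p1) / n1 + p2 * (1 - p2) / n2)
    return diff, diff - z * se, diff + z * se


# Hypothetical shares visiting at least one hard news site in two years.
diff, lo, hi = diff_in_proportions_ci(0.50, 100, 0.40, 100)
print(f"difference {diff:.3f}, 95% CI [{lo:.3f}, {hi:.3f}]")
print("significant at 5%" if lo > 0 or hi < 0 else "not significant at 5%")
```

An interval that spans zero, as here, is consistent with no change between years; the same check applies to the untrustworthy-site shares with the appropriate sample sizes.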

Another possibility is that consumption of untrustworthy websites fell from 2016 to 2020 because individuals’ use of online fact-checking resources increased during that time. Exposure to fact-checking resources can reduce people’s engagement with misinformation online59,60,61,62,63, and using fact checks is a strategy commonly taught in effective digital media literacy interventions64,65. During the 2016 election, Guess et al.6 found that 25.3% (95% CI 22.5% to 28.2%) of Americans visited a fact-checking website. During the 2020 election, we estimate that 13.1% (95% CI 11.2% to 15.2%) of Americans visited a fact-checking site, around half as many as in 2016 (Supplementary Fig. 4). In 2016, approximately 42% of those exposed to at least one untrustworthy website were exposed to at least one fact-checking website. In 2020, this number fell to less than 30%. In sum, it does not appear that the use of fact-checking websites increased from 2016 to 2020, either among those exposed to misinformation or the population as a whole, casting doubt on the idea that exposure to misinformation decreased from 2016 to 2020 because the use of fact-checking resources increased during that time.

In this paper, we provide evidence that exposure to untrustworthy websites decreased from 2016 to 2020. More research is needed to understand the factors explaining this change, but our results represent an important update to our understanding of exposure to online misinformation. The groups most likely to be exposed in 2016 are much the same groups that were most likely to be exposed in 2020, justifying a more focused approach to research on, and support for, those groups in dealing with misinformation. While one could interpret our findings as evidence that the problem of online misinformation is improving in some way, they could also be interpreted as evidence that the nature of the problem is changing. Our work provides some initial insights into where researchers can start looking to understand the changing dynamics of online misinformation exposure.